Building Effective, Sustainable, Practical Assessment

Notes from the Library Assessment Conference

At the 2016 Library Assessment Conference, we had the opportunity to attend sessions on how to demonstrate the value of libraries, methods for data collection, analysis, and visualization, designing library spaces, and organizational issues facing the community. The conference, which attracted over 640 attendees, focused on building effective, sustainable, practical assessment. A number of themes that ran across the conference resonate with our ongoing work at Ithaka S+R.

Data visualization

There was overwhelming interest from both presenters and attendees in improving how data (especially data on collections, faculty outputs, and survey responses) can be visualized effectively using Tableau. A sizable number of conference sessions and posters focused on data visualization, and there was an at-capacity pre-conference workshop on getting started with Tableau. In between sessions, attendees shared their experiences and best practices for using Tableau.

In our session on the Ithaka S+R Local Surveys and Research Support Services projects, Starr Hoffman and Ashley Hernandez-Hall from the University of Nevada Las Vegas and Joyce Chapman from Duke University spoke about the process of transforming spreadsheets of survey responses from faculty members and students into a format that can be imported into Tableau and about their experiences sharing these visualizations with staff in their libraries. The dashboards they have created allow library staff to filter responses based on respondents’ college or school, disciplines, rank, age, and many other demographic items, and have been used widely within their libraries.

Ebony Magnus from SAIT Polytechnic, who presented on her data visualization of reference transactions, shared valuable advice for attendees thinking about engaging more deeply with Tableau: (1) just because you can make a visualization doesn’t mean you should, as your visualization should be shaped by what you’re trying to examine or convey, (2) different dashboards should be created for different audiences, as they will be interested in different views and subsets of the same information, and (3) pie charts are never an effective way to visualize information.

Motivating factors: assessment vs. advocacy

As Lisa Hinchliffe from the University of Illinois at Urbana-Champaign explained in her keynote, Sensemaking for Decisionmaking, there is a distinction between library assessment and advocacy, and the two can require very different approaches; with assessment efforts, data needs to be gathered and analyzed thoughtfully and deeply, while with advocacy, information should be summarized and condensed for other stakeholders. (However, in both endeavors, it’s crucial that we make evidence-based decisions, and not decision-based evidence; that is, we do not attempt to draw conclusions and then find evidence to back up those conclusions.) The tension between these two endeavors—assessment and advocacy—was echoed throughout the sessions.

Lise Doucette from Western University presented on the political, economic, and values-based motivators of assessment work, having analyzed proceedings from previous Library Assessment Conferences. Doucette observed notable patterns in the purpose of the assessment endeavors; they were often focused on proving the library’s value externally or improving the library’s spaces, resources, and services internally. About half of the papers were focused on proving, while over 90% aimed at improving the library, and about a third were motivated by both. Doucette advised that before beginning assessment work, one should examine what values and goals are motivating this work, what certain types of results will indicate, and how results will be used.

Mark Emmons from the University of New Mexico and Carol Mollman from Washington University in St. Louis presented on the proficiencies developed by the ACRL Task Force on Standards for Proficiencies for Assessment Librarians and Coordinators. These proficiencies included both data collection and analysis (understanding of best practices for efficient and sustainable data collection, management, and storage) and advocacy and marketing (expanding the voice of the library in arenas that can impact the institution). Again, this session returned to the theme of balancing one’s role in advocacy and assessment: how can we best champion the library while also maintaining objectivity and making evidence-based decisions?

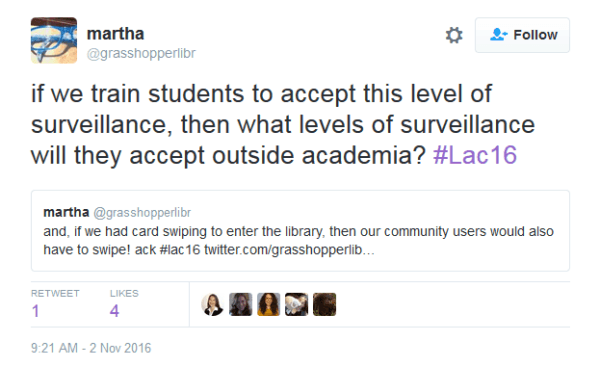

Respecting student privacy

Many sessions focused on sophisticated analyses of the relationship between student usage of the library and success outcomes. The University of Minnesota and Grand Valley State University in particular have undertaken such projects, tying library usage to increased retention, decreased time to graduation, and higher GPAs.

These presentations raised questions about students’ awareness of how their personal data is being used and potential ethical implications:

- Are libraries, and institutions more broadly, obtaining explicit consent for the use of these data? And who determines when one does or does not need explicit consent?

- In cases where libraries are collaborating with institutional research offices, to what extent does the library have access to students’ personal information?

- And at what point are we collecting too much data on student usage of the library?

Earlier this year, Ithaka S+R hosted a convening in partnership with Stanford University’s Center for Advanced Research through Online Learning (CAROL) to catalyze discussion, create resources, and begin to build a set of principles and a framework for governance of responsible use of student data.

Four basic principles of responsible use of student data in higher education were developed out of this initiative: (1) a shared understanding of the basic purposes and limits of data collection by instructors, administrators, students, and third party vendors, (2) transparency and clear representations of the nature and extent of information describing students that is held by their institution and third party organizations and how this information is being used, (3) an obligation that institutions study student data to make informed improvements, and (4) an imperative that education should enable opportunity and open futures. These principles can certainly be applied to the use of student data in these studies of library value and impact.

Deepening and expanding assessment research

Another emerging issue highlighted at the conference was how to encourage deeper, more expansive models for assessment that move beyond the more typical practice of assessment as single-sited, institution-specific research developed on a project-by-project basis. Several presentations on the “data” panel contended not only with how systematic, centralized approaches to collecting and storing data enable better understanding and quality control of data collection, but also with how more streamlined approaches can free librarians to focus on data analysis rather than the logistics of data collection, which in turn facilitates more sophisticated use of multiple data points.

Beyond deepening analysis at the individual institutional level, there is also the potential for cross-institutional collaboration and longitudinal research. For instance, the University of Minnesota and Grand Valley State University correlated student success to their library use by gathering data that tracked students over the course of their university careers. The “Day in the Life” study tracked student space use practices through multi-sited collaborative research at the University of Colorado (Boulder), City University of New York (City Tech and Borough of Manhattan Community College), Gustavus Adolphus College, University of North Carolina (Charlotte) and Indiana University-Purdue University Indianapolis.

Our session also featured two talks about the Research Support Services program, in which we facilitate large-scale multi-institutional cohorts of librarian researchers to examine the research activities of scholars by discipline. We are currently fielding projects on Religious Studies, Agriculture, and Public Health. Danielle highlighted the program’s underlying methodology, and Jenifer Gundry and Virginia Dearborn of Princeton Theological Seminary shared their insights as one of the 18 local research teams that collaborated on the Religious Studies project.

With all of these topics in mind, we’re giving additional thought to a few over-arching themes and thinking towards what we see as future issues to watch:

Data: While only one session was explicitly dedicated to “data” (in contrast to multiple sessions on services, space, learning, and organizational issues), this theme permeated the conference. Assessment librarians are not only contending with how to manage data collection and make their analyses more effective through visualization, but also with the privacy implications of their data collection. As assessment in libraries continues to grow and evolve, professionals in this area will continue to grapple with the issue of how assessment data can be shared with others for comparative research, ideally openly. Sharing data beyond the individual institution has great potential to deepen assessment research, but also complicates the issue of data collection and privacy exponentially.

Meaning and aims of assessment: The growth of the conference (from 200 in 2006 to over 600 registrants in 2016) is a testimony to the growth and influence of assessment pursuits in academic libraries more widely. With this growth comes the need for greater reflection on the means and aims of assessment research and on why it is conducted. An underlying current is the limit, or boundaries, of what assessment research can and should be. How much further will library assessment research expand in terms of what is studied, how, and by whom? In this resource-challenged era of higher education, will the assessment community disentangle assessment from advocacy or further develop assessment as a mechanism to prove the value of libraries?
